UK regulator Ofcom has published a discussion paper exploring the different tools and techniques that tech firms can use to help users identify deepfake AI-generated videos.

The paper explores the merits of four ‘attribution measures’: watermarking, provenance metadata, AI labels, and context annotations.

These four measures are designed to provide information about how AI-generated content has been created, and – in some cases – can indicate whether the content is accurate or misleading.

This comes as new Ofcom research reveals that 85% of adults support online platforms attaching AI labels to content, although only one in three (34%) have ever seen one. Deepfakes have been used for financial scams, to depict people in non-consensual sexual imagery and to spread disinformation about politicians.

The discussion paper is a follow-up to Ofcom’s first Deepfake Defences paper, published last July.

The paper includes eight key takeaways to guide industry, government and researchers:

- Evidence shows that attribution measures can help users to engage with content more critically, when deployed with care and proper testing.

- Users should not be left to identify deepfakes on their own, and platforms should avoid placing the full burden on individuals to detect misleading content.

- Striking the right balance between simplicity and detail is crucial when communicating information about AI to users.

- Attribution measures need to accommodate content that is neither wholly real nor entirely synthetic, communicating how AI has been used to create content and not just whether it has been used.

- Attribution measures can be susceptible to removal and manipulation. Ofcom’s technical tests show that watermarks can often be stripped from content following basic edits.

- Greater standardisation across individual attribution measures could boost the efficacy and take-up of these measures.

- The pace of change means it would be unwise to make sweeping claims about attribution measures.

- Attribution measures should be used in combination with other interventions, from AI classifiers to reporting mechanisms, to tackle the greatest range of deepfakes.

Ofcom said the research will also inform its policy development and supervision of regulated services under the Online Safety Act.

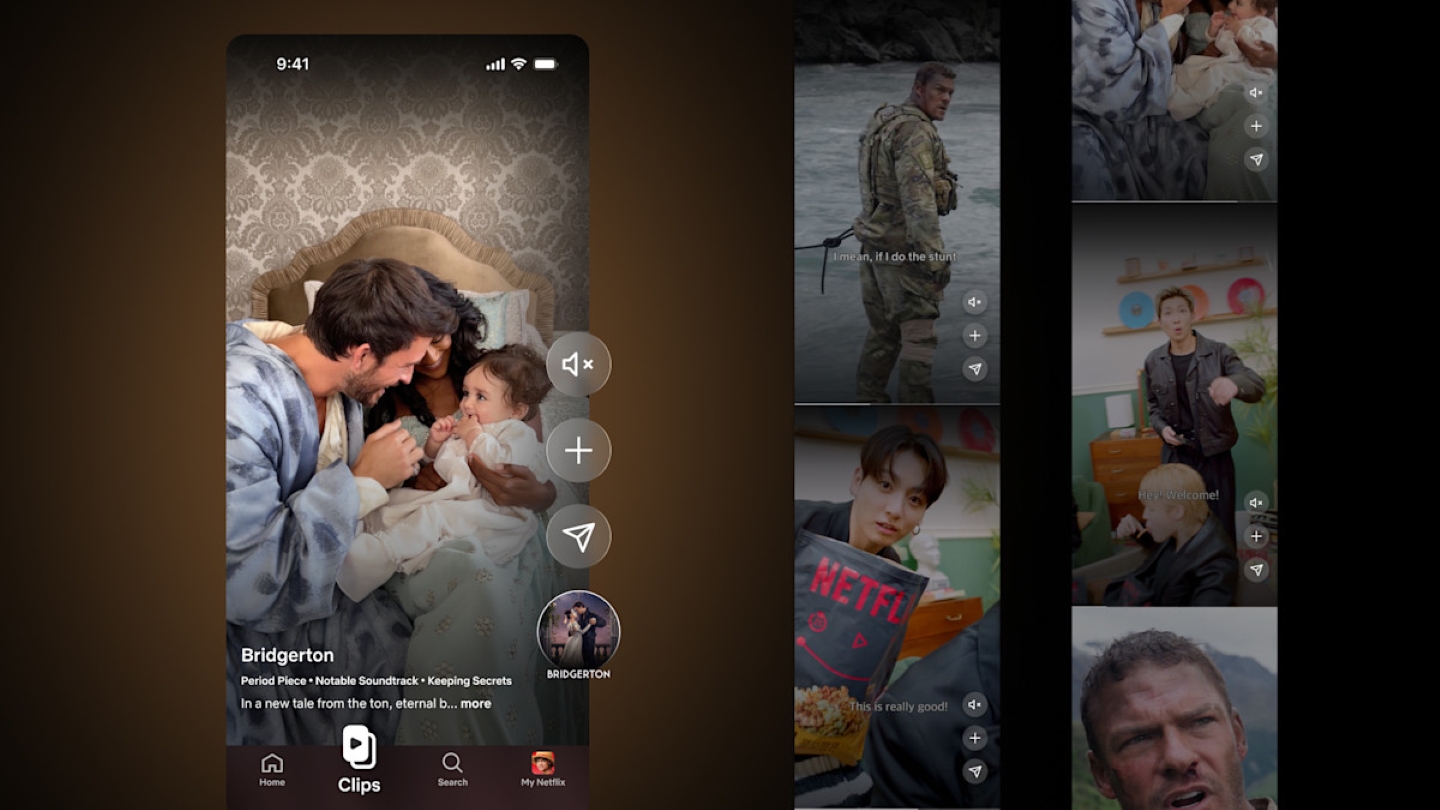

Netflix launches Clips vertical video feed

Netflix is revamping its mobile app, introducing a vertical video feed called Clips intended to help users discover new content.

UK screen industry must invest more in mid-level professionals, ScreenSkills reports

The UK screen sector needs to invest in mid-level specialists to stay competitive, according to a ScreenSkills report published this week.

2026 sees sharp increase in credential-based attacks, MPA data reveals

The Motion Picture Association’s content security initiative TPN issued more security alerts in the first quarter of 2026 than in all of 2025, according to its latest cybersecurity data.

FCC orders early review of Disney’s TV licenses after Trump comments

The Federal Communications Commission has ordered The Walt Disney Company, American Broadcasting Company, and television subsidiaries to file early license renewal applications for their television stations.

Adolescence and The Celebrity Traitors lead winners of Bafta TV Craft Awards

Adolescence and The Celebrity Traitors led the winners for this year’s Bafta Television Craft Awards, taking home two prizes each.

.jpg)